ADAAS Insights

AI SDLC with A-Concept: Why Scrum Is No Longer Enough — And What Comes Next

The way software is built is changing faster than the processes used to manage it. AI is no longer a productivity add-on — it is becoming a delivery participant. This article examines what that shift actually requires from architecture, from process, and from the people who run both.

Key Takeaways

- AI SDLC is not Scrum with a code generator. It is a delivery model where architecture, scope, and validation are made explicit enough for AI to participate reliably.

- The three failure modes of unstructured AI adoption — hallucinations, architecture drift, and ceremony overhead — are all architectural problems, not model problems.

- A-Concept's primitive system provides the structural vocabulary AI execution requires: explicit scope, declared boundaries, and living documentation that stays current because the code structure itself is the spec.

- Every Scrum ceremony needs an AI-specific extension — from delegation classification in refinement to AI delivery logs in review.

- The Scrum Master role is the most natural candidate for AI agent augmentation: context management, backlog coherence, and cross-sprint memory are exactly what LLMs do best — when given structured data to work with.

The Uncomfortable Truth About Scrum in an AI World

Scrum was designed in the early 1990s for a very specific type of work: teams of human developers, moving at human speed, producing code line by line. It solved real coordination problems — how to break large projects into manageable cycles, how to align a team around shared goals, how to surface blockers before they became disasters.

It worked. For thirty years, it worked remarkably well.

But the assumption buried inside every Scrum ceremony — every sprint planning, every daily standup, every retrospective — is that the person doing the work is a human being who reads a task, builds context in their head, and writes a solution. That assumption is no longer safe.

When an AI model joins your delivery process, it doesn't "build context in its head." It operates on whatever structured information it can observe in the moment. It makes educated guesses when boundaries are unclear. It produces plausible-looking output that may quietly violate architectural constraints no one thought to specify explicitly. And it moves fast enough that by the time the drift is visible, it has already compounded.

Three specific failure modes emerge when teams apply Scrum to AI-assisted delivery without adaptation:

AI hallucinations that are really architectural gaps. When a model generates code that doesn't fit the system, the instinct is to blame the prompt or the model. But most of the time, the real issue is structural — the model had no reliable way to understand what the system expected. Better prompting helps at the margins. Better architecture solves it at the root.

Architecture that drifts faster than documentation can track. AI-augmented teams produce more code, faster. Traditional documentation cycles — updated at kickoff, ignored thereafter — cannot keep pace. Within months, the map and the territory diverge, and every new feature is built on a fiction.

Ceremonies that slow teams down without adding safety. Sprint planning that doesn't account for AI involvement produces execution gaps. Retrospectives that don't ask "where did the AI context fail?" miss the most important learning signal. The ceremony continues; the value degrades.

Fig. 1 — The same delivery phases, but a fundamentally different execution contract

The AI SDLC Flow: Eight Phases in a Continuous Loop

AI SDLC is not a replacement for Scrum. It is an extension: a set of additional disciplines that make Scrum work reliably in an environment where AI is a delivery participant, not just a tool. The flow itself looks familiar at the surface. What changes is the nature of each phase. Four of the eight phases change the most and are covered here: discovery, structuring, AI-readiness classification, and validation.

Discovery still asks about business problems, expected outcomes, and risk levels — but those answers now feed into a structural analysis of what parts of the system are touched and what the risk level of delegating execution to AI actually is.

Structuring is where AI SDLC diverges most sharply from traditional practice. Every task that will involve AI execution must be mapped to the architectural primitives of the system — not at a vague level, but specifically: which Concepts, Containers, and Components are in scope; which are explicitly off-limits; what invariants must hold before and after execution; what the expected outputs are. The quality of this mapping is the single greatest predictor of whether AI-generated code fits the system or drifts from it.
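To make the mapping concrete, here is a minimal sketch of what a structured task record could look like, with a check that flags incomplete mappings before a task is handed to AI. The `StructuralMapping` shape, the `findMappingGaps` helper, and the primitive names in the example are illustrative assumptions, not part of the A-Concept package API.

```typescript
// Hypothetical record for one task; field names are assumptions for illustration.
interface StructuralMapping {
  taskId: string;
  inScope: string[];         // primitives the AI may modify, e.g. "Component:InvoiceFormatter"
  offLimits: string[];       // primitives the AI must not touch
  invariants: string[];      // conditions that must hold before and after execution
  expectedOutputs: string[]; // artifacts the task should produce
}

// Returns a list of problems; an empty list means the mapping is explicit enough to delegate.
function findMappingGaps(m: StructuralMapping): string[] {
  const gaps: string[] = [];
  if (m.inScope.length === 0) gaps.push("no in-scope primitives declared");
  if (m.invariants.length === 0) gaps.push("no invariants declared");
  if (m.expectedOutputs.length === 0) gaps.push("no expected outputs declared");
  // A primitive cannot be both editable and forbidden.
  const overlap = m.inScope.filter((p) => m.offLimits.indexOf(p) !== -1);
  for (const p of overlap) gaps.push(`"${p}" is both in scope and off-limits`);
  return gaps;
}

const exampleMapping: StructuralMapping = {
  taskId: "T-101",
  inScope: ["Container:Billing", "Component:InvoiceFormatter"],
  offLimits: ["Component:PaymentGateway"],
  invariants: ["invoice totals are unchanged by formatting"],
  expectedOutputs: ["updated InvoiceFormatter", "unit tests for the new format"],
};

const exampleGaps = findMappingGaps(exampleMapping); // empty: ready for delegation
```

A check like this fits naturally into backlog refinement: a task with a non-empty gap list is not yet ready for an AI-readiness classification.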

AI-readiness classification answers a question that Scrum never had to ask: is this task safe to delegate to AI end-to-end, should it be AI-assisted with human architectural judgment, or must it remain human-led? Making this explicit — in the backlog, before sprint planning — is what turns an honest estimate into an achievable sprint.

Validation in AI SDLC extends beyond passing the test suite. It includes architectural conformance checks — does the generated code respect the structural boundaries that were defined? — alongside invariant checks, security checks, and API contract compatibility. The architectural conformance check is the addition that matters most. It is what catches drift before it compounds, and it is only possible if the architecture is explicit enough to check against.
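One simple form of architectural conformance check compares the files a change touched against the boundaries declared for the task. The sketch below assumes that architectural units map to path prefixes, which is a simplification. `ScopeContract`, `checkConformance`, and the example paths are hypothetical names used for illustration only.

```typescript
// Hypothetical contract: boundaries expressed as path prefixes (an assumption).
interface ScopeContract {
  allowedPrefixes: string[];   // paths the task may modify
  forbiddenPrefixes: string[]; // paths explicitly off-limits, even inside an allowed area
}

interface ConformanceReport {
  conforms: boolean;
  violations: string[]; // changed paths that fall outside the contract
}

function hasPrefix(path: string, prefix: string): boolean {
  return path.indexOf(prefix) === 0;
}

// A path violates the contract if it is forbidden, or if no allowed prefix covers it.
function checkConformance(changedPaths: string[], contract: ScopeContract): ConformanceReport {
  const violations = changedPaths.filter((path) => {
    const forbidden = contract.forbiddenPrefixes.some((p) => hasPrefix(path, p));
    const allowed = contract.allowedPrefixes.some((p) => hasPrefix(path, p));
    return forbidden || !allowed;
  });
  return { conforms: violations.length === 0, violations };
}

const invoiceContract: ScopeContract = {
  allowedPrefixes: ["src/billing/invoice/"],
  forbiddenPrefixes: ["src/billing/invoice/tax/"],
};

const conformanceResult = checkConformance(
  [
    "src/billing/invoice/format.ts",    // inside the declared scope
    "src/billing/invoice/tax/rates.ts", // explicitly off-limits
    "src/auth/session.ts",              // outside the declared scope
  ],
  invoiceContract,
);
// conformanceResult.violations lists the last two paths
```

A check like this only works because the boundaries were written down before execution, which is the point the paragraph above makes.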

Fig. 2 — The AI SDLC loop: eight phases with a structured feedback path back into A-Concept

AI-Readiness Classification: The Honest Backlog

Not every task should be delegated to AI. One of the most important contributions of AI SDLC is making this distinction explicit in the backlog, rather than leaving it to each developer's individual judgment sprint by sprint.

A practical three-tier model separates work by the degree of AI involvement that is structurally safe:

AI-safe tasks can be delegated end-to-end with lightweight human review. Examples include CRUD additions, UI extensions, internal service wiring, tests, documentation, and mapping layers. These are low-ambiguity, low-risk, and well-bounded, so the AI can own the execution.

AI-assisted tasks are where AI accelerates implementation but humans retain architectural judgment. Examples include refactoring, bounded context changes, data model evolution, and API contract updates. The AI does the heavy lifting; a human signs off on the shape of the solution before the first line is written.

Human-led tasks are where AI is a support tool only. Examples include security-critical logic, payment flows, compliance-sensitive code, core domain invariants, and cross-cutting architectural redesign. AI can research, draft, and suggest, but the execution decision remains entirely human.

This classification doesn't just improve safety. It makes sprint planning honest. Teams stop over-promising because they've conflated "AI can help with this" with "AI can own this." Those are different statements with different risk profiles.
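The three tiers can be recorded on each backlog item as an explicit field rather than left implicit. The following sketch shows one possible rule set, checked from most to least restrictive. The trait names and the `classifyDelegation` function are assumptions made for illustration; a real team would tune the criteria to its own risk profile.

```typescript
type DelegationTier = "ai-safe" | "ai-assisted" | "human-led";

// Hypothetical traits a team might record during refinement.
interface BacklogItemTraits {
  securityOrPaymentCritical: boolean;
  complianceOrCoreInvariant: boolean;
  crossCuttingRedesign: boolean;
  changesContractsOrDataModel: boolean; // API contracts, data model, bounded contexts, refactoring
  wellBounded: boolean;                 // scope and expected outputs are explicit
}

// The most restrictive matching rule wins; anything not clearly bounded is never "ai-safe".
function classifyDelegation(t: BacklogItemTraits): DelegationTier {
  if (t.securityOrPaymentCritical || t.complianceOrCoreInvariant || t.crossCuttingRedesign) {
    return "human-led";
  }
  if (t.changesContractsOrDataModel || !t.wellBounded) {
    return "ai-assisted";
  }
  return "ai-safe";
}

const crudEndpointTraits: BacklogItemTraits = {
  securityOrPaymentCritical: false,
  complianceOrCoreInvariant: false,
  crossCuttingRedesign: false,
  changesContractsOrDataModel: false,
  wellBounded: true,
};

const crudTier = classifyDelegation(crudEndpointTraits); // "ai-safe"
```

Defaulting unclear items to "ai-assisted" rather than "ai-safe" is a deliberately conservative choice in this sketch: the cost of an unnecessary human sign-off is lower than the cost of unreviewed drift.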

Fig. 3 — Three-tier AI delegation model: honest backlog starts here

What Changes Inside Scrum Ceremonies

AI SDLC doesn't eliminate Scrum ceremonies. It extends them with the questions that AI participation makes necessary. Each ceremony gains a specific set of additions — not to add overhead, but to surface the information that keeps AI-assisted delivery reliable.

Backlog refinement adds: AI delegation level, structural scope, risk score, and invariant list. The output is a backlog that is honest about what AI can own versus what it can assist with.

Sprint planning adds: context pack preparation, AI/human work split, validation path, and approval gates. The output is a Sprint Execution Map — not just a sprint backlog.

Daily standup adds: what the AI generated, where it got blocked, where context was insufficient, and what needed human escalation. This surfaces structural problems in hours, not at the end of the sprint.

Sprint review adds: what percentage of delivery was AI-assisted, what acceleration was achieved, and what drift was prevented by the guardrails. This builds the evidence base for expanding AI delegation over time.

Retrospective adds: which task types are becoming AI-safe, where architecture was too ambiguous for reliable AI execution, and what patterns should be encoded back into A-Concept. This is the learning loop that makes the whole system improve sprint by sprint.
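The sprint review figures described above can be derived from a structured delivery log instead of being estimated from memory. This is a minimal sketch under the assumption that each delivered item leaves one log entry. The `DeliveryLogEntry` shape and the `summarizeSprint` function are hypothetical.

```typescript
// Hypothetical log entry, one per delivered backlog item.
interface DeliveryLogEntry {
  itemId: string;
  executedBy: "ai" | "ai-assisted" | "human";
  escalatedToHuman: boolean; // AI execution stopped and a person took over
  driftCaught: boolean;      // a guardrail rejected out-of-scope output
}

interface SprintReviewSummary {
  aiInvolvedPercent: number; // share of items with any AI execution, rounded
  escalations: number;
  driftPrevented: number;
}

function summarizeSprint(log: DeliveryLogEntry[]): SprintReviewSummary {
  const total = log.length;
  const aiInvolved = log.filter((e) => e.executedBy !== "human").length;
  return {
    // Guard against an empty sprint to avoid dividing by zero.
    aiInvolvedPercent: total === 0 ? 0 : Math.round((aiInvolved / total) * 100),
    escalations: log.filter((e) => e.escalatedToHuman).length,
    driftPrevented: log.filter((e) => e.driftCaught).length,
  };
}

const sprintLog: DeliveryLogEntry[] = [
  { itemId: "T-101", executedBy: "ai", escalatedToHuman: false, driftCaught: false },
  { itemId: "T-102", executedBy: "ai-assisted", escalatedToHuman: true, driftCaught: true },
  { itemId: "T-103", executedBy: "ai", escalatedToHuman: false, driftCaught: true },
  { itemId: "T-104", executedBy: "human", escalatedToHuman: false, driftCaught: false },
];

const sprintSummary = summarizeSprint(sprintLog);
// sprintSummary: 75 percent AI-involved, 1 escalation, 2 drift events caught
```

Tracked over several sprints, the same summary gives the retrospective a factual basis for deciding which task types are becoming AI-safe.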

Fig. 4 — What each Scrum ceremony gains in an AI SDLC context

Where A-Concept Becomes the Structural Foundation

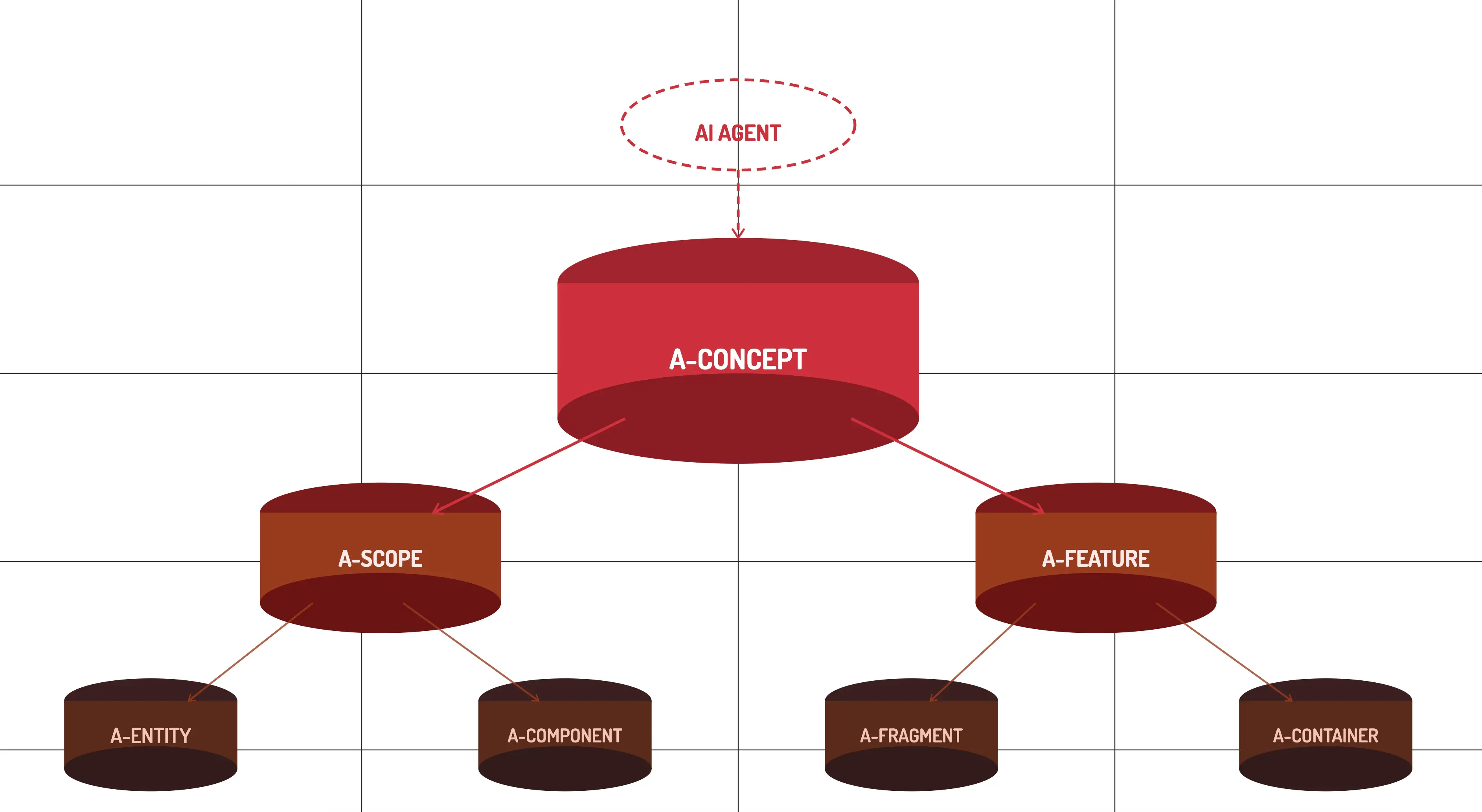

A-Concept is not just compatible with AI SDLC — it was built for it. The framework's primitive system provides exactly the structural vocabulary that AI execution requires. When a task is mapped to a specific A-Concept, A-Container, A-Component, A-Scope, A-Feature, A-Entity, or A-Fragment, the ambiguity that causes AI drift is largely eliminated.

A-Concept plays five distinct roles in an AI SDLC delivery model. As a structural context provider, it ensures the architecture is self-documenting — an AI model doesn't need to infer where responsibility lives, because it is encoded in the structure itself. As a delegation boundary enforcer, it allows teams to tell AI to work only within a specific Concept or Feature, and not to touch adjacent Components — and the framework makes that constraint enforceable, not merely advisory.

As an architectural vocabulary, it replaces vague prompts with precise structural instructions: "extend this Feature inside this Scope using this Entity pattern." That specificity narrows the space of plausible outputs and makes AI execution far more predictable, though not strictly deterministic. As a validation anchor, it allows teams to compare the expected structural shape of generated output against the actual result, catching drift before it reaches production. And as living documentation, it keeps the documented architecture from drifting away from reality: the code structure is the spec, always current, always accessible to both human developers and AI systems.

This is the difference between a codebase that AI can extend reliably and one that AI merely autocompletes. The framework enforces the rules that prompts alone cannot.
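As an illustration of the "architectural vocabulary" role, a structural instruction can be held as typed data and rendered into prompt text, so that every delegated task names its Feature, Scope, and Entity pattern explicitly. The `StructuralInstruction` type and `renderInstruction` function below are assumptions for this sketch and do not represent the A-Concept API.

```typescript
// Hypothetical typed form of a structural instruction.
interface StructuralInstruction {
  action: "Extend" | "Create" | "Refactor";
  feature: string;
  scope: string;
  entityPattern: string;
  doNotTouch: string[]; // adjacent primitives that are off-limits
}

// Renders the instruction as plain text suitable for inclusion in a prompt.
function renderInstruction(i: StructuralInstruction): string {
  const lines = [
    `${i.action} Feature "${i.feature}" inside Scope "${i.scope}" using Entity pattern "${i.entityPattern}".`,
  ];
  if (i.doNotTouch.length > 0) {
    lines.push(`Do not modify: ${i.doNotTouch.join(", ")}.`);
  }
  return lines.join("\n");
}

const exportInstruction: StructuralInstruction = {
  action: "Extend",
  feature: "InvoiceExport",
  scope: "Billing",
  entityPattern: "Invoice",
  doNotTouch: ["PaymentGateway"],
};

const instructionText = renderInstruction(exportInstruction);
```

Because the instruction is data first and text second, the same record can also drive the scope check used in validation.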

Fig. 5 — The five roles A-Concept plays in AI SDLC delivery

The Role Nobody Is Talking About: The Scrum Master Becomes an AI Agent

There is a role in Scrum that is easy to overlook when discussing AI adoption — because it doesn't write code. The Scrum Master manages flow. They track what's in the backlog, understand the state of each item, surface blockers, maintain context across ceremonies, and help the team stay coherent from sprint to sprint.

That job description is, almost point for point, a description of what large language models are exceptionally good at — when given structured data to reason over.

Think about what a Scrum Master actually holds in mind at any given moment: the full backlog with priorities and dependencies, the history of decisions made on each story, the context of what changed last sprint and why, the team's velocity patterns, the open risks. Reconstructing and maintaining that context — across dozens of stories, across weeks of sprints — is cognitively expensive for a human and naturally tractable for an LLM with access to the right structured state.

In an AI SDLC model with A-Concept, the conditions for an AI Scrum Agent are met for the first time. The backlog isn't just a list of vague stories — each item is mapped to A-Concept primitives with explicit scope, invariants, and boundaries. The codebase is self-documenting, so the agent can observe the actual state of the system. Change history is traceable through the AI Delivery Log. The agent doesn't have to guess what was built — it can read the structural record.

This is the difference between an AI that can summarize Jira tickets and one that can genuinely manage delivery flow. The first is a search interface. The second requires the kind of structured, always-current architectural context that A-Concept is designed to provide.
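The kind of structured state such an agent would reason over can be sketched as follows. This example only shows the deterministic groundwork: surfacing blocked and unmapped items from a typed backlog before any language model is involved. The `AgentBacklogItem` shape and the `buildFlowReport` function are hypothetical.

```typescript
// Hypothetical backlog item as the agent would see it.
interface AgentBacklogItem {
  id: string;
  state: "todo" | "in-progress" | "done";
  dependsOn: string[];           // ids of items that must be done first
  hasStructuralMapping: boolean; // mapped to explicit scope, invariants, and boundaries
}

interface FlowReport {
  blocked: string[];  // open items waiting on unfinished dependencies
  unmapped: string[]; // open items without a structural mapping
}

function buildFlowReport(items: AgentBacklogItem[]): FlowReport {
  const doneIds = items.filter((i) => i.state === "done").map((i) => i.id);
  const open = items.filter((i) => i.state !== "done");
  return {
    // A dependency that is unknown or not done counts as a blocker.
    blocked: open
      .filter((i) => i.dependsOn.some((d) => doneIds.indexOf(d) === -1))
      .map((i) => i.id),
    unmapped: open.filter((i) => !i.hasStructuralMapping).map((i) => i.id),
  };
}

const sprintBacklog: AgentBacklogItem[] = [
  { id: "A", state: "done", dependsOn: [], hasStructuralMapping: true },
  { id: "B", state: "in-progress", dependsOn: ["A"], hasStructuralMapping: true },
  { id: "C", state: "todo", dependsOn: ["B"], hasStructuralMapping: true },
  { id: "D", state: "todo", dependsOn: [], hasStructuralMapping: false },
];

const flowReport = buildFlowReport(sprintBacklog);
// flowReport.blocked contains "C"; flowReport.unmapped contains "D"
```

A report like this is what separates an agent that manages flow from one that summarizes tickets: the inputs are explicit state, not free text.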

This doesn't eliminate the Scrum Master role — it reshapes it. The cognitive load of context maintenance moves to the agent. What remains, and what becomes more important, is the human work that AI genuinely cannot do: reading team dynamics, navigating organizational politics, making judgment calls about when to slow down, building the kind of trust with stakeholders that only comes from human relationship. The Scrum Master becomes a delivery strategist. That is a better use of a person.

Fig. 6 — The Scrum Master role evolves: context management moves to the AI agent

Four Levels of AI Involvement: Where Is Your Team?

Not every team, feature, or company is in the same place. AI SDLC works across a spectrum of AI involvement, and the right level depends on the structural maturity of the codebase and the team's experience with explicit architectural contracts.

Level 1 — Human only. AI as a research and documentation tool only. Reserved for the most risk-sensitive logic: security, payments, compliance, core domain invariants. No AI execution, human review of everything.

Level 2 — AI-assisted coding. Humans design and architect; AI implements specific, well-bounded components under close review. Appropriate for most active product development. This is where most teams operating with AI tools today actually sit, whether or not they've named it.

Level 3 — AI-driven feature execution. Humans define scope, constraints, and validation criteria; AI handles the primary implementation within those boundaries. Achievable once the structural framework is mature and the team has built confidence through Level 2 experience.

Level 4 — AI-driven modernization. AI assists in migrating legacy codebases toward a structured architecture — incrementally, under human supervision. This is where A-Concept becomes particularly powerful: not just as a framework for greenfield development, but as a migration target that gives legacy modernization a clear direction and a measurable endpoint.

Naming the levels makes it possible to move intentionally. Many teams jump from Level 1 to Level 3 assumptions without having done the structural work that Level 3 requires, and then wonder why their AI-generated code needs so much remediation.

Fig. 7 — Four organizational levels of AI involvement in delivery

Conclusion

The problems AI SDLC addresses are not abstract. Most engineering organizations adopting AI tools today are likely to recognize at least one of the failure modes described here: hallucinations rooted in missing architectural context, documentation that hasn't kept up with the speed of generation, and sprint plans that don't account for the actual risk profile of AI execution. The questions are live. What is missing, in most cases, is a coherent structural answer.

A-Concept provides that answer at the architectural level. AI SDLC provides it at the process level. Together, they constitute something that Scrum alone cannot offer in an AI-augmented team: a delivery model where architecture, scope, and validation are made explicit enough for AI to participate reliably — and where the feedback from every sprint makes the system structurally smarter over time.

The teams that will compound advantage over the next several years are not the ones using the most AI tools. They are the ones who built the structural foundation that makes AI execution trustworthy at scale — before the debt from unstructured adoption made it expensive to course-correct.

For greenfield systems, the calculus is simple: the structural cost of getting it right from the beginning is usually lower than the refactoring cost of getting it right later. For teams already carrying the weight of legacy systems, A-Concept offers a migration path: not a rewrite, but a gradual progression toward a codebase that AI can extend without guessing.

The A-Concept framework is available as an open-source npm package. Full documentation and architecture guides are maintained by the ADAAS R&D team. For an introduction to the framework's primitives and design philosophy, see A-Concept: The AI-Ready Framework Born from 5 Million Lines of Code.

Thinking About AI SDLC for Your Team?

Whether you're evaluating A-Concept for a new project or looking to introduce AI-assisted delivery into an existing team, our engineers can walk through the architectural tradeoffs with you. Book a technical session or reach out below.